SB9


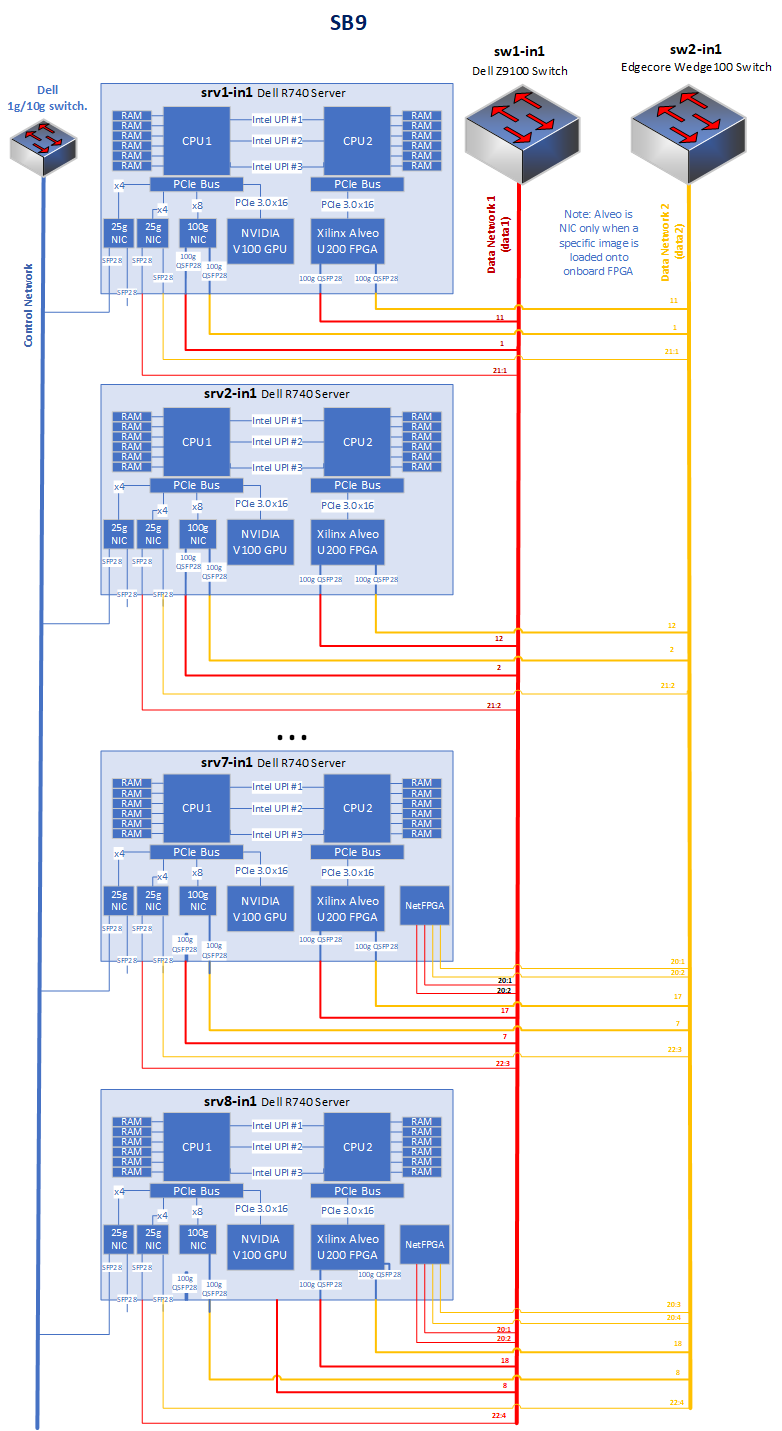

Sandbox 9 (SB9) is dedicated to wired networking and cloud computing experimentation. Its nodes have no wireless interfaces.

Sandbox 9 consists of 8 servers, each with the following specifications:

- Server model: Dell R740XD

- CPU: 2x Intel Xeon Gold 6126

- Memory: 192GB

- Disk: 256GB SSD

- Network: 2x 25Gb Mellanox ConnectX-4 Lx

- Network: 2x 100Gb Mellanox ConnectX-5 Ex

- GPU Accelerator: Nvidia V100 16GB

- FPGA Accelerator: Xilinx Alveo U200 (2x 100Gb Ethernet)

In addition, some nodes have extra accelerators or network cards:

- node1-5 and node1-6: Xilinx Alveo SN1022 SmartNIC (2x 100Gb Ethernet)

- node1-7 and node1-8: NetFPGA 10G (4x 10Gb Ethernet)

The nodes are connected to two data switches:

- Dell Z9332F-ON

  - Ports: 32x 100Gb ports; each can break out to 2x 50Gb, 2x 40Gb, 4x 25Gb, or 4x 10Gb ports.

  - OS: Dell OS10

  - Management: in-band, out-of-band, or via the onboard BMC.

- Edgecore Wedge100BF-32X

  - Ports: 32x 100Gb ports; each can break out to 2x 50Gb, 2x 40Gb, 4x 25Gb, or 4x 10Gb ports.

  - OS: Azure SONiC or Open Network Linux

  - Management: in-band, out-of-band, or via the onboard BMC.
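The connection tables below name each switch subport `1/1/<port>:<subport>`: a port left at full 100Gb speed appears as `:1`, while a broken-out port yields `:1` through `:N`. For example, port 20 is broken out to 4x 10Gb for the NetFPGA links. The helper below is a hypothetical sketch (not a testbed tool) that reproduces this naming; the mode strings such as `"4x10G"` are labels invented for this sketch, not switch CLI syntax.

```python
from __future__ import annotations

# Breakout modes from the switch specifications above, as
# (number of subports, speed per subport in Gb/s). The string keys are
# labels for this sketch only, not switch CLI syntax.
BREAKOUT_MODES = {
    "1x100G": (1, 100),
    "2x50G": (2, 50),
    "2x40G": (2, 40),
    "4x25G": (4, 25),
    "4x10G": (4, 10),
}


def breakout(port: str, mode: str) -> list[tuple[str, int]]:
    """Return (subport name, speed in Gb/s) for each subport of a port."""
    count, speed = BREAKOUT_MODES[mode]
    return [(f"{port}:{i}", speed) for i in range(1, count + 1)]


# Port 20 on both switches is broken out 4x10G for the NetFPGA links.
for name, gbps in breakout("1/1/20", "4x10G"):
    print(name, f"{gbps}G")
```

The same helper explains the ConnectX-4 Lx rows: ports 21 and 22 in 4x25G mode give subports `1/1/21:1` to `1/1/22:4`, one per node.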

Figure 1: SB9 Block Diagram

Data Switch Connections

Dell switch connections:

| Switch Port | Connection | Speed |
|---|---|---|
| 1/1/1:1 | node1-1 Mellanox ConnectX-5 Ex 100G, Port 1 | 100G |
| 1/1/2:1 | node1-2 Mellanox ConnectX-5 Ex 100G, Port 1 | 100G |
| 1/1/3:1 | node1-3 Mellanox ConnectX-5 Ex 100G, Port 1 | 100G |
| 1/1/4:1 | node1-4 Mellanox ConnectX-5 Ex 100G, Port 1 | 100G |
| 1/1/5:1 | node1-5 Mellanox ConnectX-5 Ex 100G, Port 1 | 100G |
| 1/1/6:1 | node1-6 Mellanox ConnectX-5 Ex 100G, Port 1 | 100G |
| 1/1/7:1 | node1-7 Mellanox ConnectX-5 Ex 100G, Port 1 | 100G |
| 1/1/8:1 | node1-8 Mellanox ConnectX-5 Ex 100G, Port 1 | 100G |
| 1/1/9:1 | node1-5 Xilinx Alveo SN1022, Port 1 | 100G |
| 1/1/10:1 | node1-6 Xilinx Alveo SN1022, Port 1 | 100G |
| 1/1/11:1 | node1-1 Xilinx Alveo U200, Port 1 | 100G |
| 1/1/12:1 | node1-2 Xilinx Alveo U200, Port 1 | 100G |
| 1/1/13:1 | node1-3 Xilinx Alveo U200, Port 1 | 100G |
| 1/1/14:1 | node1-4 Xilinx Alveo U200, Port 1 | 100G |
| 1/1/15:1 | node1-5 Xilinx Alveo U200, Port 1 | 100G |
| 1/1/16:1 | node1-6 Xilinx Alveo U200, Port 1 | 100G |
| 1/1/17:1 | node1-7 Xilinx Alveo U200, Port 1 | 100G |
| 1/1/18:1 | node1-8 Xilinx Alveo U200, Port 1 | 100G |
| 1/1/19:1 | - | - |
| 1/1/20:1 | node1-7 NetFPGA 10G, Port 1 | 10G |
| 1/1/20:2 | node1-7 NetFPGA 10G, Port 2 | 10G |
| 1/1/20:3 | node1-8 NetFPGA 10G, Port 1 | 10G |
| 1/1/20:4 | node1-8 NetFPGA 10G, Port 2 | 10G |
| 1/1/21:1 | node1-1 Mellanox ConnectX-4 Lx 25G, Port 1 | 25G |
| 1/1/21:2 | node1-2 Mellanox ConnectX-4 Lx 25G, Port 1 | 25G |
| 1/1/21:3 | node1-3 Mellanox ConnectX-4 Lx 25G, Port 1 | 25G |
| 1/1/21:4 | node1-4 Mellanox ConnectX-4 Lx 25G, Port 1 | 25G |
| 1/1/22:1 | node1-5 Mellanox ConnectX-4 Lx 25G, Port 1 | 25G |
| 1/1/22:2 | node1-6 Mellanox ConnectX-4 Lx 25G, Port 1 | 25G |
| 1/1/22:3 | node1-7 Mellanox ConnectX-4 Lx 25G, Port 1 | 25G |
| 1/1/22:4 | node1-8 Mellanox ConnectX-4 Lx 25G, Port 1 | 25G |
| 1/1/23:1 | - | - |
| 1/1/24:1 | - | - |
| 1/1/25:1 | - | - |
| 1/1/26:1 | - | - |
| 1/1/27:1 | - | - |
| 1/1/28:1 | - | - |
| 1/1/29:1 | - | - |
| 1/1/30:1 | - | - |
| 1/1/31:1 | Uplink (Fiber F3) | 100G |
| 1/1/32:1 | Uplink (Fiber F4) | 100G |

Edgecore switch connections:

| Switch Port | Connection | Speed |
|---|---|---|
| 1/1/1:1 | node1-1 Mellanox ConnectX-5 Ex 100G, Port 2 | 100G |
| 1/1/2:1 | node1-2 Mellanox ConnectX-5 Ex 100G, Port 2 | 100G |
| 1/1/3:1 | node1-3 Mellanox ConnectX-5 Ex 100G, Port 2 | 100G |
| 1/1/4:1 | node1-4 Mellanox ConnectX-5 Ex 100G, Port 2 | 100G |
| 1/1/5:1 | node1-5 Mellanox ConnectX-5 Ex 100G, Port 2 | 100G |
| 1/1/6:1 | node1-6 Mellanox ConnectX-5 Ex 100G, Port 2 | 100G |
| 1/1/7:1 | node1-7 Mellanox ConnectX-5 Ex 100G, Port 2 | 100G |
| 1/1/8:1 | node1-8 Mellanox ConnectX-5 Ex 100G, Port 2 | 100G |
| 1/1/9:1 | node1-5 Xilinx Alveo SN1022, Port 2 | 100G |
| 1/1/10:1 | node1-6 Xilinx Alveo SN1022, Port 2 | 100G |
| 1/1/11:1 | node1-1 Xilinx Alveo U200, Port 2 | 100G |
| 1/1/12:1 | node1-2 Xilinx Alveo U200, Port 2 | 100G |
| 1/1/13:1 | node1-3 Xilinx Alveo U200, Port 2 | 100G |
| 1/1/14:1 | node1-4 Xilinx Alveo U200, Port 2 | 100G |
| 1/1/15:1 | node1-5 Xilinx Alveo U200, Port 2 | 100G |
| 1/1/16:1 | node1-6 Xilinx Alveo U200, Port 2 | 100G |
| 1/1/17:1 | node1-7 Xilinx Alveo U200, Port 2 | 100G |
| 1/1/18:1 | node1-8 Xilinx Alveo U200, Port 2 | 100G |
| 1/1/19:1 | - | - |
| 1/1/20:1 | node1-7 NetFPGA 10G, Port 3 | 10G |
| 1/1/20:2 | node1-7 NetFPGA 10G, Port 4 | 10G |
| 1/1/20:3 | node1-8 NetFPGA 10G, Port 3 | 10G |
| 1/1/20:4 | node1-8 NetFPGA 10G, Port 4 | 10G |
| 1/1/21:1 | node1-1 Mellanox ConnectX-4 Lx 25G, Port 2 | 25G |
| 1/1/21:2 | node1-2 Mellanox ConnectX-4 Lx 25G, Port 2 | 25G |
| 1/1/21:3 | node1-3 Mellanox ConnectX-4 Lx 25G, Port 2 | 25G |
| 1/1/21:4 | node1-4 Mellanox ConnectX-4 Lx 25G, Port 2 | 25G |
| 1/1/22:1 | node1-5 Mellanox ConnectX-4 Lx 25G, Port 2 | 25G |
| 1/1/22:2 | node1-6 Mellanox ConnectX-4 Lx 25G, Port 2 | 25G |
| 1/1/22:3 | node1-7 Mellanox ConnectX-4 Lx 25G, Port 2 | 25G |
| 1/1/22:4 | node1-8 Mellanox ConnectX-4 Lx 25G, Port 2 | 25G |
| 1/1/23:1 | - | - |
| 1/1/24:1 | - | - |
| 1/1/25:1 | - | - |
| 1/1/26:1 | - | - |
| 1/1/27:1 | - | - |
| 1/1/28:1 | - | - |
| 1/1/29:1 | - | - |
| 1/1/30:1 | - | - |
| 1/1/31:1 | - | - |
| 1/1/32:1 | Uplink (Fiber F1) | 100G |
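The Dell and Edgecore tables follow a regular pattern: port 1 of each dual-port NIC is cabled to the Dell switch and port 2 to the Edgecore switch, at the same switch port number (NetFPGA ports 1-2 go to the Dell, 3-4 to the Edgecore). The sketch below encodes that pattern as a lookup, which may help when working out which switch port to configure for an experiment. `locate` and the short NIC labels (`"ConnectX-5"`, `"U200"`, and so on) are hypothetical names for illustration; the tables above remain the authoritative source.

```python
from __future__ import annotations

# Short NIC labels used only in this sketch.
DUAL_PORT_NICS = {"ConnectX-5", "ConnectX-4 Lx", "U200", "SN1022"}


def locate(node: str, nic: str, nic_port: int) -> tuple[str, str]:
    """Return (switch, switch port) for a node's NIC port, per the SB9 tables."""
    n = int(node.rsplit("-", 1)[1])  # "node1-3" -> 3
    if not 1 <= n <= 8:
        raise ValueError(f"unknown node {node}")
    if nic in DUAL_PORT_NICS:
        if nic_port not in (1, 2):
            raise ValueError("dual-port NICs have ports 1 and 2")
        # NIC port 1 is cabled to the Dell switch, port 2 to the Edgecore.
        switch = "Dell" if nic_port == 1 else "Edgecore"
        if nic == "ConnectX-5":
            port = f"1/1/{n}:1"  # switch ports 1-8
        elif nic == "U200":
            port = f"1/1/{10 + n}:1"  # switch ports 11-18
        elif nic == "SN1022":
            if n not in (5, 6):
                raise ValueError("only node1-5 and node1-6 have an SN1022")
            port = f"1/1/{4 + n}:1"  # switch ports 9-10
        else:
            # ConnectX-4 Lx: 4x25G breakout, nodes 1-4 on port 21, 5-8 on port 22.
            port = f"1/1/{21 + (n - 1) // 4}:{(n - 1) % 4 + 1}"
        return switch, port
    if nic == "NetFPGA":
        if n not in (7, 8) or nic_port not in (1, 2, 3, 4):
            raise ValueError("NetFPGA ports 1-4 exist only on node1-7 and node1-8")
        # Ports 1-2 go to the Dell, 3-4 to the Edgecore, all on the
        # 4x10G breakout of switch port 20.
        switch = "Dell" if nic_port <= 2 else "Edgecore"
        sub = (n - 7) * 2 + (nic_port - 1) % 2 + 1
        return switch, f"1/1/20:{sub}"
    raise ValueError(f"unknown NIC {nic}")


print(locate("node1-6", "ConnectX-4 Lx", 2))
```

Encoding the rule rather than copying every row keeps the lookup short, but it only holds while the cabling stays as documented; if a cable moves, trust the tables.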

Procedures (Edgecore Wedge)

Manual installation of a new switch OS: currently this must be done by a user with admin access. A service to automate this operation is planned.

- Download the image and change the symlink on repo1 at `/tftpboot/os/switch/100bf-32x/current-os`.

- Connect to the BMC and run ONIE, following the steps at https://github.com/opencomputeproject/OpenNetworkLinux/blob/master/docs/GettingStartedWedge.md

- For SONiC, follow https://github.com/Azure/SONiC/wiki/Quick-Start

- Set up the mapping of serial lanes to ports.
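The first step, repointing the `current-os` symlink on repo1, can be done atomically so that anything reading the link mid-update should never find it missing. A minimal Python sketch, assuming it runs on repo1 with admin rights and that the new image has already been downloaded into `/tftpboot/os/switch/100bf-32x/`; the function name `point_current_os` and the image filename in the usage comment are hypothetical.

```python
import os


def point_current_os(os_dir: str, image_name: str) -> None:
    """Atomically repoint <os_dir>/current-os at image_name.

    Assumption: image_name is a file already downloaded into os_dir.
    """
    if not os.path.isfile(os.path.join(os_dir, image_name)):
        raise FileNotFoundError(f"{image_name} not found in {os_dir}")
    link = os.path.join(os_dir, "current-os")
    tmp_link = link + ".tmp"
    # Remove a stale temporary link left over from an interrupted run.
    if os.path.lexists(tmp_link):
        os.remove(tmp_link)
    # Create the new link under a temporary name, then rename it over the
    # old one; on POSIX filesystems the rename replaces the link itself
    # (not its target) in a single atomic step.
    os.symlink(image_name, tmp_link)
    os.replace(tmp_link, link)


# Usage (hypothetical image name):
# point_current_os("/tftpboot/os/switch/100bf-32x", "onl-installer.bin")
```

Using a relative link target keeps the link valid if the directory is exported or mounted under a different path.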


Last modified on Dec 20, 2022, 11:08:09 PM

